Description

Machine Learning (ML) Pipelines are used to automate the ML training process (Feature Engineering, Train Model, Register Model, Deploy Model) and to perform batch inferencing. (Real-time inferencing is done through a deployed endpoint instead, such as an AKS endpoint or Azure Functions; see How and Where to Deploy.)

In the Azure ML SDK, pipelines are created with the Pipeline class; batch inference is done by adding a ParallelRunStep to a pipeline. The full list of pipeline steps is in the Steps Package (azureml.pipeline.steps). The most common ones are:

- AutoMLStep Class

  - If data is in an AML Dataset:

    - Forecasting: MLonAzure GitHub Pipeline to train with AutoML

    - Regression: Product Team Notebook

    - Classification: Product Team Notebook

  - If data is not in an AML Dataset:

    - Regression (passing data into the AutoML Step): Product Team Notebook

- DataTransferStep

  - MLonAzure Blog: Pipelines: DataTransferStep

  - MLonAzure GitHub Repository: AML Data Transfer

- DatabricksStep

- EstimatorStep

- HyperDriveStep

- MpiStep

- PythonScriptStep

  - MLonAzure GitHub: Pipeline Python and R

- RScriptStep

  - MLonAzure GitHub: Pipeline Python and R

- ParallelRunStep

  - MLonAzure Blog: MLOps: Training and Scoring Models in Parallel

  - Azure AI Blog: Train and Score Hundreds of Thousands of Models
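
ParallelRunStep runs a user-supplied entry script across many workers. The minimal sketch below assumes a FileDataset input, so each mini-batch arrives as a list of file paths. It follows the entry-script convention of an `init()` called once per worker and a `run(mini_batch)` called per mini-batch that returns a list of results; the word-count "model" is a hypothetical stand-in for loading and calling a registered model.

```python
import os
import tempfile

# Populated once per worker process by init() and reused by every run() call.
model = None


def _count_words(text):
    """Hypothetical stand-in for a real model's predict call."""
    return len(text.split())


def init():
    """Called once per worker before any mini-batch is processed."""
    global model
    # A real entry script would typically load a registered model here.
    model = _count_words


def run(mini_batch):
    """Called once per mini-batch; with a FileDataset input, mini_batch is a list of file paths."""
    results = []
    for file_path in mini_batch:
        with open(file_path, encoding="utf-8") as f:
            score = model(f.read())
        # One "file,score" row per input file.
        results.append(f"{os.path.basename(file_path)},{score}")
    return results


if __name__ == "__main__":
    # Local smoke test: simulate one mini-batch of two files.
    with tempfile.TemporaryDirectory() as tmp:
        paths = []
        for name, text in [("a.txt", "hello world"), ("b.txt", "one two three")]:
            path = os.path.join(tmp, name)
            with open(path, "w", encoding="utf-8") as f:
                f.write(text)
            paths.append(path)
        init()
        print(run(paths))  # ['a.txt,2', 'b.txt,3']
```

Because `init()` and `run()` are plain functions, the script can be smoke-tested locally before it is wired into a ParallelRunStep.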

Note: Each step in a pipeline specifies its own compute target, so every step can use a cluster configured for the work it performs. For example, a PythonScriptStep that runs a TensorFlow script requiring GPUs can use a GPU cluster, while a second PythonScriptStep running a much simpler Python script can use a smaller cluster, possibly with a single node.
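
A PythonScriptStep runs an ordinary Python script on its compute target and receives its input and output locations as command-line arguments. The sketch below is a hypothetical lightweight data-prep script of the kind that could run on a small CPU cluster; the argument names `--input_file` and `--output_dir`, the output file `clean.csv`, and the cleaning rule are illustrative assumptions, not part of the SDK.

```python
import argparse
import csv
import os


def parse_args(argv=None):
    """Parse the paths the pipeline passes in through the step's arguments."""
    parser = argparse.ArgumentParser(description="Hypothetical lightweight data-prep step")
    parser.add_argument("--input_file", required=True, help="CSV file to clean")
    parser.add_argument("--output_dir", required=True, help="Folder read by the next step")
    return parser.parse_args(argv)


def is_complete(row):
    """Keep a row only if every field is a non-blank string (short or long rows are dropped)."""
    return all(isinstance(value, str) and value.strip() for value in row.values())


def main(argv=None):
    """Drop incomplete rows from the input CSV and write the rest to output_dir/clean.csv."""
    args = parse_args(argv)
    with open(args.input_file, newline="", encoding="utf-8") as f:
        reader = csv.DictReader(f)
        fieldnames = reader.fieldnames or []
        rows = [row for row in reader if is_complete(row)]
    os.makedirs(args.output_dir, exist_ok=True)
    with open(os.path.join(args.output_dir, "clean.csv"), "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(rows)
    # Return the number of rows kept so the caller can log or check it.
    return len(rows)
```

As a step script, the file would end with an `if __name__ == "__main__": main()` guard so the pipeline's arguments are parsed when the step runs; `main()` accepts an optional argument list so it can also be called directly in a local test.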

Major Steps for Creating a Training Pipeline

1. Construct the pipeline by defining multiple steps, each with an appropriate compute target to run on.

2. Test the pipeline by submitting it to an experiment: Experiment.submit(your_pipeline).

3. Publish the pipeline. (Note: a new pipeline ID is created every time the pipeline is published, which is why Step 4 creates an endpoint that stays constant.)

4. Publish a PipelineEndpoint.

5. Automate pipeline runs through a pipeline Schedule, Azure DevOps, or Azure Data Factory.
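
The reason for publishing an endpoint, namely that every publish creates a new pipeline ID, can be illustrated with a small SDK-free model. This is a conceptual stand-in, not the Azure ML API: `publish()` and `Endpoint` are hypothetical names. In the v1 SDK the corresponding pieces are, roughly, `Pipeline.publish()`, which returns a PublishedPipeline with a new ID, and `PipelineEndpoint` in azureml.pipeline.core, whose `add_default()` makes a newly published pipeline the version the endpoint runs.

```python
import uuid
from dataclasses import dataclass, field
from typing import List


def publish(pipeline_name: str) -> str:
    """Conceptual stand-in for publishing: every call returns a brand-new pipeline id."""
    return f"{pipeline_name}-{uuid.uuid4()}"


@dataclass
class Endpoint:
    """Conceptual stand-in for a pipeline endpoint.

    Callers always use the same endpoint name; the endpoint keeps every published
    pipeline id as a version and routes runs to the current default.
    """

    name: str
    versions: List[str] = field(default_factory=list)

    def add_default(self, pipeline_id: str) -> int:
        """Register a newly published pipeline as the default; return its 1-based version."""
        self.versions.append(pipeline_id)
        return len(self.versions)

    @property
    def default_pipeline_id(self) -> str:
        if not self.versions:
            raise LookupError(f"endpoint {self.name!r} has no published pipelines")
        return self.versions[-1]


if __name__ == "__main__":
    endpoint = Endpoint(name="training-endpoint")
    endpoint.add_default(publish("training"))
    # Re-publishing (e.g. after changing a step) produces a different id...
    second_id = publish("training")
    version = endpoint.add_default(second_id)
    # ...but a Schedule, Azure DevOps or Azure Data Factory keeps calling the same endpoint name.
    print(endpoint.name, version, endpoint.default_pipeline_id == second_id)  # training-endpoint 2 True
```

The design point is the indirection: automation is configured once against the stable endpoint, and republishing only changes which pipeline ID the endpoint resolves to.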

Walkthrough and Code Samples

- Product Team GitHub Pipelines

- MLonAzure: GitHub Pipeline to train with AutoML

- MLonAzure: Pipelines: DataTransferStep Article and GitHub Repository: AML Data Transfer

- AI Blog: Batch Inferencing

- Product Team: GitHub Batch Inference

- Product Team: Channel 9: Batch Inference Video

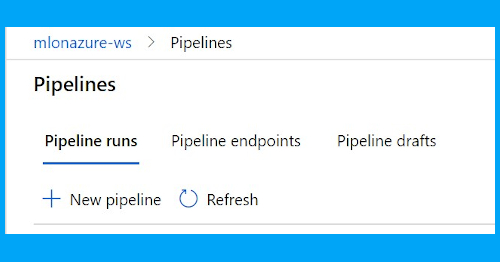

Pipelines in Studio
